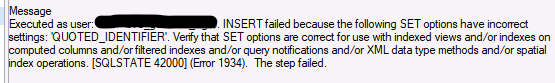

QUOTED_IDENTIFIER Set Incorrectly?!

So What Is Going On?

I recently ran into an issue when I set up a job to collect information from an extended event. I wanted to write that to a table in my scratch DBA database. This allowed the customer and I to slice and dice data with ease. This seemed easy enough. I am no stranger to creating these tables and pushing information to them via SQL Server Agent jobs. My job was failing though with the error below saying I have the incorrect SET option for QUOTED_IDENTIFIER.

Backing Up A Step, What Is QUOTED_IDENTIFIER?

Set to ON by default, QUOTED_IDENTIFIER allows use any word as an object identifier so long as it is delimited by quotes (“) or brackets ([]). If set to OFF, restricted keywords can’t be used as an identifier (such as name, description, etc). There are a few cases where you need to have QUOTED_IDENTIFIER set to ON, but the one we are going to focus on for this blog is “SET QUOTED_IDENTIFIER must be ON when you invoke XML data type methods.” Extended event data is stored in XEL files (which is just a variant of XML), so QUOTED_IDENTIFIER must be set to ON.

Back To The Investigation!

So the setting is ON by default but best to not assume, I’d hate to make an ASS out of U and ME. There are a couple ways to check to make sure your setting is on for your target table. The easiest way to find out is right clicking the table and going to the properties. You will see the SET options under the Options section. You can also script the table to see the SET option for QUOTED_IDENTIFIER.

The configuration is correct, but we still receive the same error. I tried dropping and recreating the table a couple of times but it didn’t fix the issue. In a swing for the fences effort, I tried to explicitly call out the SET operation. Different articles in my research called it out before statements as they wanted to show examples of using the setting. I set QUOTED_IDENTIFIER to ON in-line on the SQL Server Agent job code right below table creation and setting variables but before the INSERT statement. The below code would allow you to create a table if it doesn’t exist, delete data that is older than 30 days, and insert new items into the table.

IF NOT EXISTS (SELECT 1 FROM DBA_Admin.sys.objects WHERE name = 'TestTable')

BEGIN

CREATE TABLE DBA_Admin.dbo.TestTable

(

[ts] [datetime],

[event_name] [nvarchar](256),

[username] [nvarchar](1000),

[client_hostname] [nvarchar](1000),

[client_app_name] [nvarchar](1000),

[database_name] [nvarchar](300),

[sql] [nvarchar](max)

)

END

select min(ts), max(ts) from DBA_Admin.dbo.TestTable

DELETE FROM DBA_Admin.dbo.TestTable

WHERE ts < DATEADD(day,-30,GETDATE())

DECLARE @MaxDate DATETIME

SELECT @MaxDate = MAX(ts)

FROM DBA_Admin.dbo.TestTable

SELECT CAST(event_data as xml) AS event_data

INTO #cte

FROM sys.fn_xe_file_target_read_file('ExtendedEventName*.xel', null, null, null)

SET QUOTED_IDENTIFIER ON

INSERT INTO DBA_Admin.dbo.TestTable

SELECT ts = event_data.value(N'(event/@timestamp)[1]', N'datetime')

,event_name = event_data.value(N'(event/@name)[1]', N'nvarchar(256)')

,[username] = event_data.value(N'(event/action[@name="username"]/value)[1]', N'nvarchar(1000)')

,[client_hostname] = event_data.value(N'(event/action[@name="client_hostname"]/value)[1]', N'nvarchar(1000)')

,[client_app_name] = event_data.value(N'(event/action[@name="client_app_name"]/value)[1]', N'nvarchar(1000)')

,[database_name] = event_data.value(N'(event/action[@name="database_name"]/value)[1]', N'nvarchar(300)')

,[sql] =

CASE

WHEN event_data.value(N'(event/data[@name="statement"]/value)[1]', N'nvarchar(max)') IS NULL

THEN event_data.value(N'(event/data[@name="batch_text"]/value)[1]', N'nvarchar(max)')

ELSE event_data.value(N'(event/data[@name="statement"]/value)[1]', N'nvarchar(max)')

END

FROM #cte

CROSS APPLY #cte.event_data.nodes(N'/event') AS x(ed)

WHERE event_data.value(N'(event/@timestamp)[1]', N'datetime') > @MaxDateConclusion

This issue was a testament to not giving up on difficult troubleshooting. You need to dot all of the I’s and cross all T’s and not throw away an idea before trying it. I could not find an article anywhere where someone had my exact problem. Every article was showing things at a more basic level of someone having the setting OFF instead of ON. I hope this helps someone else and saves them the hours of a headache! If you have questions or even an explanation for why I experienced this issue, I would love to hear from you!

Tuning Basics – Updating an Update

You are out doing your scheduled sweeps of the environment and you notice a huge I/O consumer is one of your update procedures. Your initial thought may be to panic because how can you test data that is changing? You have several methods to tuning queries that adjust data that allow data to revert between tests.

NOTE: We always recommend tuning queries in non-production until we have our final tested solution to implement in production.

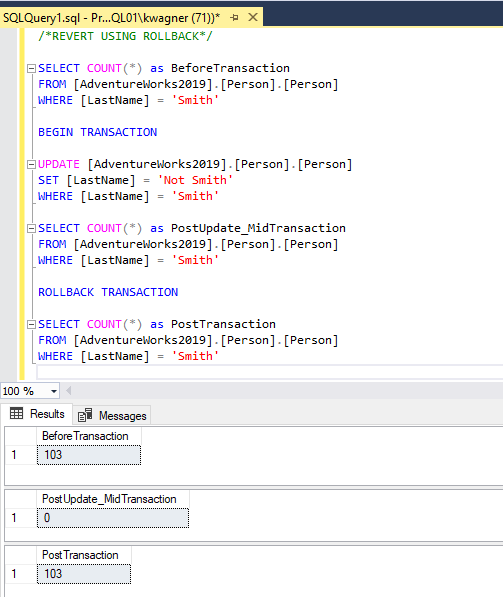

Tuning with Rollback Transaction

One of the more basic methods for executing queries without changing data is to wrap the statements are you running in a BEGIN TRANSACTION and ROLLBACK TRANSACTION. This allows everything to execute and give you the performance statistics you are looking for, while leaving the data intact to allow for repeat testing.

Tuning using Database Backups

You have a couple options with your standard SQL Server backups to assist in reverting changes made to the testing environment and the same concepts are applied to your basic DR strategy. If the test is short, you can take a full backup before making a change and restore that backup to restart a test.

If you are making a series of changes over a period of time and don’t want to completely restart your test, you can utilize point in time recovery. Once your database is in full recovery, you can start with a full backup and take log backups at set intervals or before different pieces of your change. This allows you to restore to a specific point in your test.

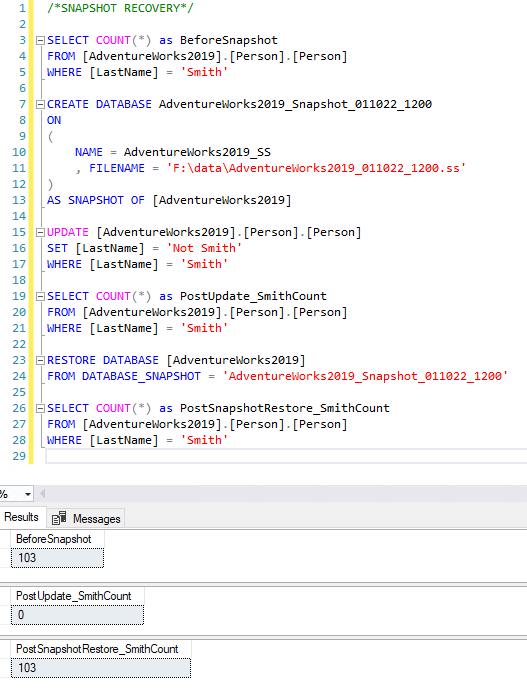

Tuning Database Snapshot

Database Snapshots are a great way to quickly revert large databases to an initial state. Database Snapshots keep a record of database pages as they change and the copy of the page so those extents can be reverted instead of restoring the whole database. Therefore, they require the associated source database as it is not a full copy of the database. Snapshots contain the original version of files of the source database and a pointer file that shows the changes since the snapshot was taken, called a sparse file. The source database must be available for snapshots to be usable. This makes them not an ideal DR solution, but great for analysis in auditing and in tuning situations.

Summary

There are many ways to quickly get back to your original state to allow for quick repetitive testing for queries that change data. A huge benefit of the methods I listed today allow you to also document where transactions and backups occur so you can open a .sql file and have all the needed steps in one document. Do you like other ways to revert changes? Maybe temporal tables? I’d be happy to cover that in a post in the near future. Tell me all about your methods in the comments below!